My apologies if you were expecting something spicy about the seats in this update – this one’s about modelling and methodology. But I do think it’s interesting, and hopefully some of you will too. A story here: this is something I actually worked on for the 2020 general election (as well as GE 2016 and LE 2019), but I never managed to complete the modelling. So there’s a personal interest in seeing how it might have gone, as well as the exercise of using this information to improve the model.

One of the challenges with the model as it stands is that it operates without any context on polling from before GE 2020. One suggestion I received on Twitter was to see how well the model would have projected GE 2020 based on GE 2016 numbers and pre-election polling.

This is a useful way of testing various parts of the model, though several issues make a direct 1:1 comparison problematic: the late Sinn Féin surge, the expansion of the Social Democrats, the creation of Aontú, and the fact that the model will be dealing with swing from 2016, which was a huge year for Independents/Others.

There is still value here in helping to refine the model, and it’s something I’m going to be working on over the coming weeks, with the slog of data entry being the main challenge. However, even the very first pieces have turned up some interesting stuff.

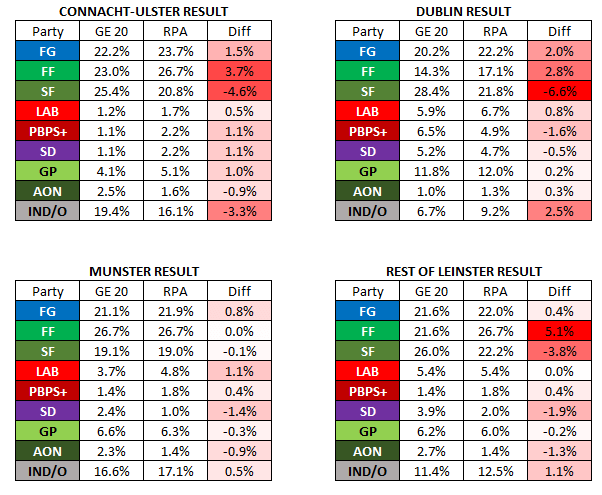

The comparison shown here sets the GE 2020 results against what the RPA would have projected in each region, had I been running the model prior to that GE.

This is, in general, an indication that the RPA is pretty accurate. That shouldn’t be surprising, as Ireland generally has quite high-quality polling, so that’s more a credit to the pollsters than it is to the model.
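
For anyone curious how a comparison like this can be scored, here is a minimal sketch. It is not the RPA code; the function names and the party labels and numbers in the example are placeholders I've made up purely for illustration. The idea is simply projected share minus actual share per party, so a positive number means a party was overpolled and a negative one means it was underpolled.

```python
from statistics import mean


def signed_errors(projected, actual):
    """Projected minus actual vote share per party, in percentage points.

    Positive = overpolled, negative = underpolled.
    """
    return {party: round(projected[party] - actual[party], 1) for party in actual}


def mean_absolute_error(projected, actual):
    """Average size of the miss across parties, ignoring direction."""
    return mean(abs(err) for err in signed_errors(projected, actual).values())


# Placeholder figures for one hypothetical region (NOT real polling or results).
projected = {"Party A": 25.0, "Party B": 20.0, "Party C": 10.0}
actual = {"Party A": 22.0, "Party B": 24.0, "Party C": 10.5}

errors = signed_errors(projected, actual)
overall = mean_absolute_error(projected, actual)
print(errors, overall)
```

Running the same calculation region by region is what lets patterns like "overpolled in one region, underpolled in another" show up.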

There are a few observations worth making from this:

- The model could not adapt quickly enough to the Sinn Féin surge, which only really became apparent in January 2020, less than a month before the election. This didn’t just impact the SF numbers, but everyone who lost voters to them, most significantly FF.

- Polling for Independents is messy, as expected; their polling numbers are very volatile.

- The model had detected an SD boost in Connacht-Ulster offset by declines elsewhere. This analysis suggests that there may be a small systemic problem at play here, rather than a true reflection of their support.

- Conversely, PBP/Solidarity are underpolled in Dublin and overpolled elsewhere. The model is currently detecting the same pattern, so this might also be a systemic issue.

- Fine Gael and Labour are overpolled in general, though for FG the overstatement did shrink in polls closer to polling day.

- Aontú were relatively hard to predict, which is not surprising as they were a new party with a very limited number of candidates.

- The RPA model does a good job of smoothing out outlier polls, like when B&A had FF on 49% in Munster in January.

- Speaking of Munster, polling there averaged out as being more accurate than everywhere else. Not sure what to make of that, but there you go!
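
To illustrate the outlier point, here is one simple way of damping a rogue poll. I want to be clear that this is a generic technique and an assumption on my part for illustration, not a description of how the RPA does it. Each poll is clipped to within a few points of the median before averaging. The 49% is the B&A Munster figure mentioned above; the other four values are made-up placeholders, and `clipped_average` and `max_dev` are hypothetical names.

```python
from statistics import mean, median


def clipped_average(values, max_dev=5.0):
    """Average after pulling each value to within max_dev points of the median."""
    centre = median(values)
    clipped = [min(max(v, centre - max_dev), centre + max_dev) for v in values]
    return mean(clipped)


# 49.0 is the outlier from the post; the rest are illustrative placeholders.
ff_munster_polls = [24.0, 26.0, 49.0, 25.0, 23.0]

plain = mean(ff_munster_polls)
smoothed = clipped_average(ff_munster_polls)
print(plain, smoothed)
```

The plain average gets dragged several points upwards by the single outlier, while the clipped version stays close to the other polls. The trade-off is that a clip this blunt would also be slow to accept a genuine jump.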

There are questions here I need to work on answering, which is good. For example, how can the model adapt to a sudden change in polling pre-election? How much can a constituency deep-dive help improve accuracy with Independents? How can I adjust the Social Democrat figures without moving too far from the data? Does increased public familiarity with Aontú breed more accurate polling?
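
On the first of those questions, one option I could explore is weighting polls by how recent they are. The sketch below is only that, a sketch: the half-life approach, the function name, and the numbers are all assumptions for illustration, not something the model currently does. Each poll's weight halves for every `half_life_days` of age, so a shorter half-life reacts faster to a late surge.

```python
def recency_weighted_average(polls, half_life_days):
    """Weighted average of (age_in_days, share) pairs.

    A poll's weight halves for every half_life_days of age.
    """
    weights = [0.5 ** (age / half_life_days) for age, _ in polls]
    total = sum(w * share for w, (_, share) in zip(weights, polls))
    return total / sum(weights)


# Hypothetical party that jumps in the most recent poll (placeholder numbers).
polls = [(60, 15.0), (30, 15.0), (0, 25.0)]

slow = recency_weighted_average(polls, half_life_days=30)
fast = recency_weighted_average(polls, half_life_days=10)
print(slow, fast)
```

The shorter half-life lands closer to the latest poll, which is what you'd want if the surge is real. It is also exactly what makes it more exposed to a single outlier, so there's a tension with the smoothing that needs balancing.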

I’ll be using this method to do a full retrospective constituency and seat analysis from 2020, which should help inform improvements to the model in specific constituencies. The main body of work on this still needs to be done, requiring pieces on swings from 2016 to 2020, projected FPV, projected transfers, and finally, seat allocation. The last part will be the most interesting, but for now this is a fairly encouraging start, both in indicating that the model is broadly going in the right direction, and in helping identify a number of specific issues to work on.